Vehicles could be fitted with what its designers call an ‘Ethical Knob’, under a proposal by Giuseppe Contissa, Francesca Lagioia, and Giovanni Sartor of CIRSFID at the University of Bologna, Italy. The device might help clarify the ethical and legal issues raised by Autonomous Vehicles (AVs). What, for example, should a self-driving car do when it ‘realizes’ (in an impending crash situation) that it could swerve to avoid a large group of pedestrians, but in the process kill the driver and passengers?

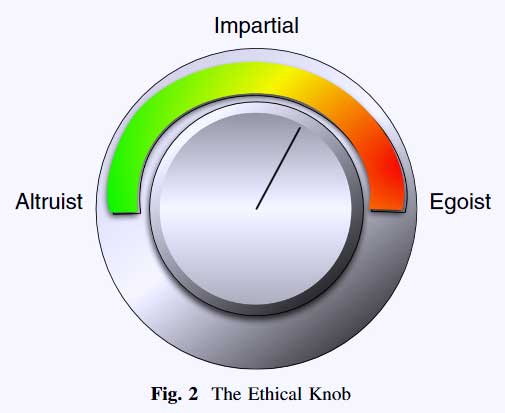

Before a journey starts, the driver could set the knob to Altruist | Impartial | Egoist (or anywhere in between), and in an emergency the AV would take the action weighted accordingly.
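To make the idea concrete, here is a minimal sketch of how a knob setting might weight a decision. It is not the authors' formalisation: the 0-to-1 scale (0 = Altruist, 0.5 = Impartial, 1 = Egoist), the linear weighting, and the names `Outcome`, `weighted_cost`, and `choose` are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    """Expected harm of one manoeuvre, split by who bears it (hypothetical model)."""
    name: str
    passenger_harm: float  # expected harm to the AV's own occupants
    others_harm: float     # expected harm to pedestrians and other third parties


def weighted_cost(outcome: Outcome, knob: float) -> float:
    """Assumed scale: knob 0 = Altruist, 0.5 = Impartial, 1 = Egoist.

    Egoist settings weight the occupants' harm more heavily;
    altruist settings weight the third parties' harm more heavily.
    """
    if not 0.0 <= knob <= 1.0:
        raise ValueError("knob must lie in [0, 1]")
    return knob * outcome.passenger_harm + (1.0 - knob) * outcome.others_harm


def choose(outcomes: list[Outcome], knob: float) -> Outcome:
    """Pick the manoeuvre with the lowest knob-weighted cost."""
    return min(outcomes, key=lambda o: weighted_cost(o, knob))


# Illustrative scenario: proceeding kills five pedestrians; swerving kills two occupants.
options = [Outcome("proceed", passenger_harm=0.0, others_harm=5.0),
           Outcome("swerve", passenger_harm=2.0, others_harm=0.0)]
print(choose(options, 0.0).name)  # Altruist -> swerve
print(choose(options, 0.5).name)  # Impartial -> swerve (1.0 < 2.5)
print(choose(options, 1.0).name)  # Egoist -> proceed
```

In this toy model only the Egoist end of the dial keeps the car on course; an intermediate setting flips the decision at the point where the two weighted costs are equal.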

The authors point out that the Ethical Knob can be viewed from either a Utilitarian or a Rawlsian perspective:

“The Rawlsian approach could appear more acceptable if we assume that the disutilities being considered represent personal injuries having the same probability, but different gravity. For example, assume that 0,6l is the quantification of the damage from paraplegia (the loss of the use of both legs) and that 0,3l corresponds to the loss of the use of one leg. Assume that by proceeding the AV would cause with certainty the pedestrian to become paraplegic, while by swerving it would cause with certainty each one of three passers-by to lose one leg each. Then it might be argued—though this conclusion is very debatable—that swerving is preferable to proceeding, on grounds of equity/equality.”
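The arithmetic behind the quoted example can be checked in a few lines. This sketch assumes that "Utilitarian" means minimising the total harm summed over everyone affected, and that "Rawlsian" means the maximin rule (minimise the harm to the worst-off individual). Those are standard readings but are stated here as assumptions; the harm figures (0.6 and 0.3, as fractions of l) come from the passage.

```python
# Disutilities as fractions of l, using the figures in the quoted passage.
PARAPLEGIA = 0.6  # the pedestrian loses the use of both legs
ONE_LEG = 0.3     # a passer-by loses the use of one leg

# Each option lists the (certain) harm suffered by each affected person.
options = {
    "proceed": [PARAPLEGIA],   # one pedestrian becomes paraplegic
    "swerve": [ONE_LEG] * 3,   # three passers-by each lose one leg
}


def utilitarian_choice(opts: dict[str, list[float]]) -> str:
    """Minimise the total disutility summed over everyone affected."""
    return min(opts, key=lambda k: sum(opts[k]))


def rawlsian_choice(opts: dict[str, list[float]]) -> str:
    """Maximin: minimise the harm suffered by the worst-off individual."""
    return min(opts, key=lambda k: max(opts[k]))


print(utilitarian_choice(options))  # proceed: total 0.6 beats roughly 0.9
print(rawlsian_choice(options))     # swerve: worst-off harm 0.3 beats 0.6
```

The two rules disagree: summing harms favours proceeding (0.6l against 0.9l in total), while protecting the worst-off individual favours swerving (no one suffers more than 0.3l), which is the equity argument the authors describe as "very debatable".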

See: ‘The Ethical Knob: ethically-customisable automated vehicles and the law’, Artificial Intelligence and Law, September 2017, Volume 25, Issue 3, pp. 365–378.

Also see, from Giovanni Sartor: ‘Why Lawyers Are Nice (or Nasty)’